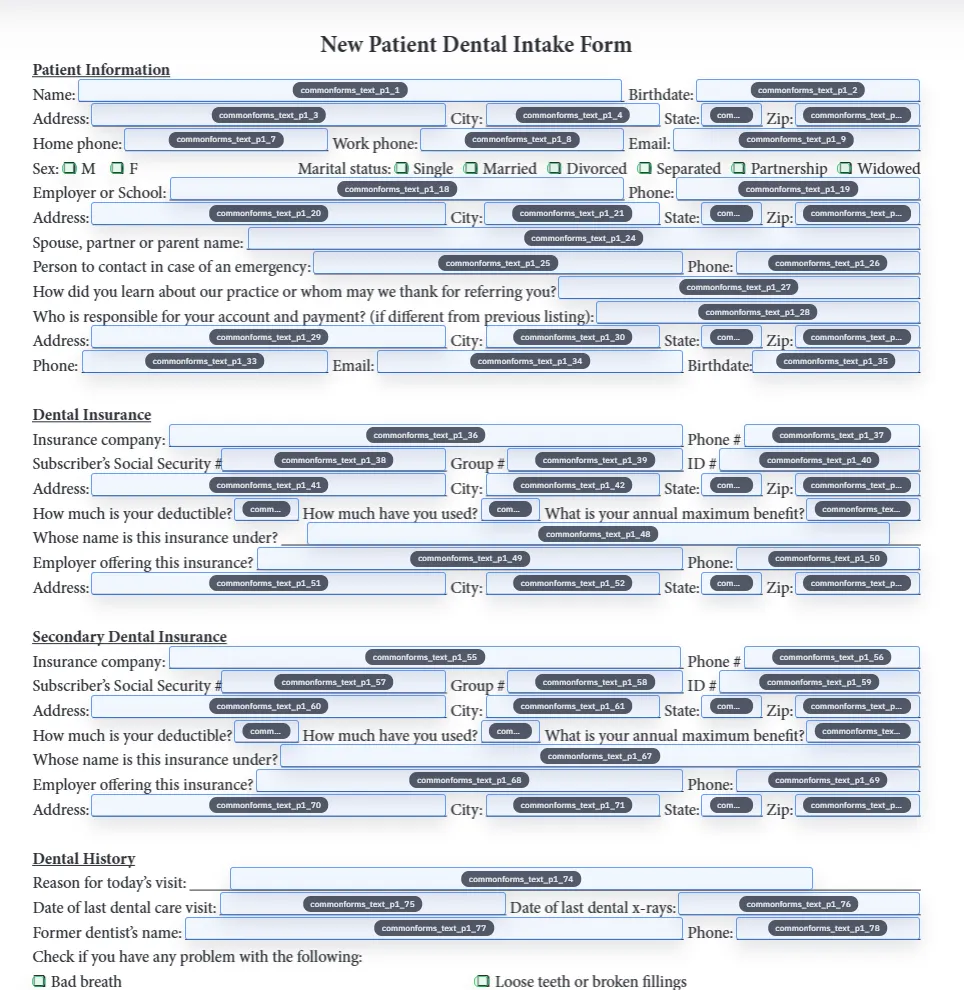

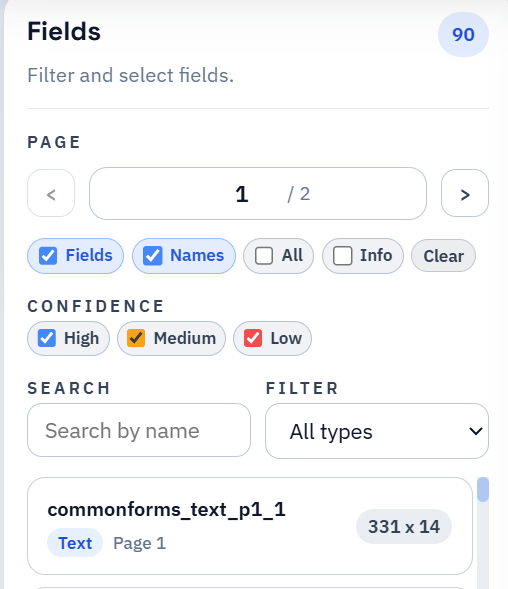

Adobe Acrobat ships AI Form Detection. Apryse (formerly PDFTron) sells a Form Field Detection capability inside its SDK. AWS Textract has a forms feature. None of these vendors publishes a head-to-head accuracy benchmark on a public dataset. That is unusual for a category that markets on accuracy claims, and it is the reason most evaluation today happens by uploading one or two test PDFs and eyeballing the results.

CommonForms changes that. The CommonForms paper (Joe Barrow, arXiv:2509.16506, published in 2025) releases both a large public benchmark dataset and the trained FFDNet models evaluated on it. Anyone can download the benchmark, run any commercial detector on it, and compare the results. The same paper reports FFDNet outperforming a popular commercial PDF reader on this benchmark.
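To make "run a detector and compare" concrete, here is a minimal sketch of how one page's detections could be scored against ground-truth boxes. It assumes boxes are `(x_min, y_min, x_max, y_max)` tuples and uses a simple greedy match at an IoU threshold of 0.5. The names `iou` and `precision_recall` are illustrative, and this is not the paper's evaluation code, which may use a different metric or matching rule.

```python
from typing import List, Tuple

# Assumed box format: (x_min, y_min, x_max, y_max) in page coordinates.
Box = Tuple[float, float, float, float]


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def precision_recall(
    predicted: List[Box], truth: List[Box], threshold: float = 0.5
) -> Tuple[float, float]:
    """Greedily match each prediction to its best unmatched ground-truth box.

    A prediction counts as a true positive when its best remaining match
    reaches the IoU threshold. Each ground-truth box is matched at most once.
    """
    unmatched = list(truth)
    true_positives = 0
    for pred in predicted:
        best = max(unmatched, key=lambda t: iou(pred, t), default=None)
        if best is not None and iou(pred, best) >= threshold:
            unmatched.remove(best)
            true_positives += 1
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(truth) if truth else 0.0
    return precision, recall


# Hypothetical page: two real fields, one found exactly, one missed,
# plus one spurious detection.
truth_boxes = [(0, 0, 10, 10), (20, 20, 30, 30)]
detected_boxes = [(0, 0, 10, 10), (50, 50, 60, 60)]
print(precision_recall(detected_boxes, truth_boxes))  # (0.5, 0.5)
```

Running the same scoring over every page, for each detector, gives numbers that can be compared directly instead of eyeballed.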

DullyPDF runs the actual FFDNet-Large model from that paper as its detection backbone. We did not retrain it, did not fork it, and did not modify the inference pipeline. The detector you use in DullyPDF is the same detector the published benchmark numbers describe.